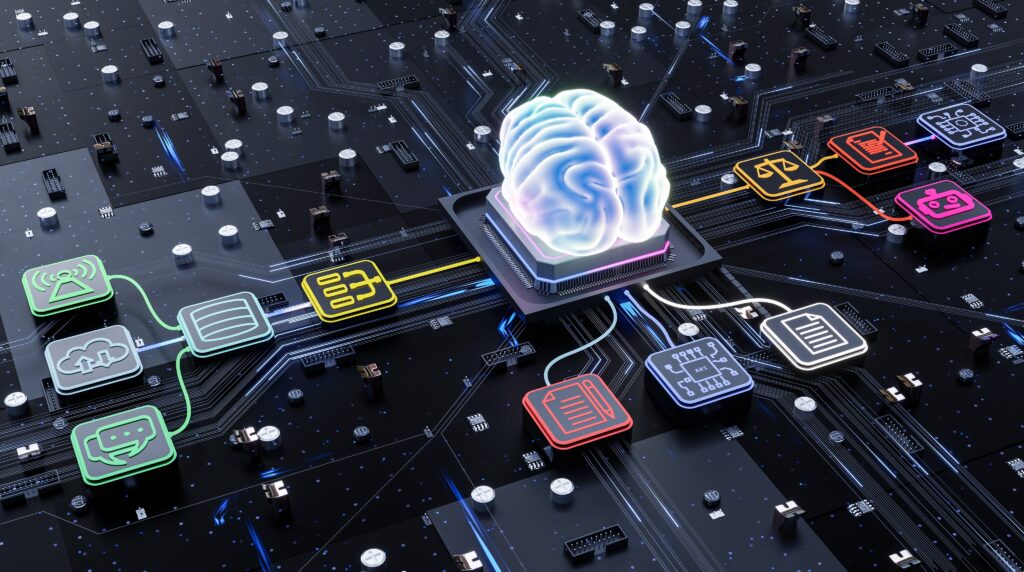

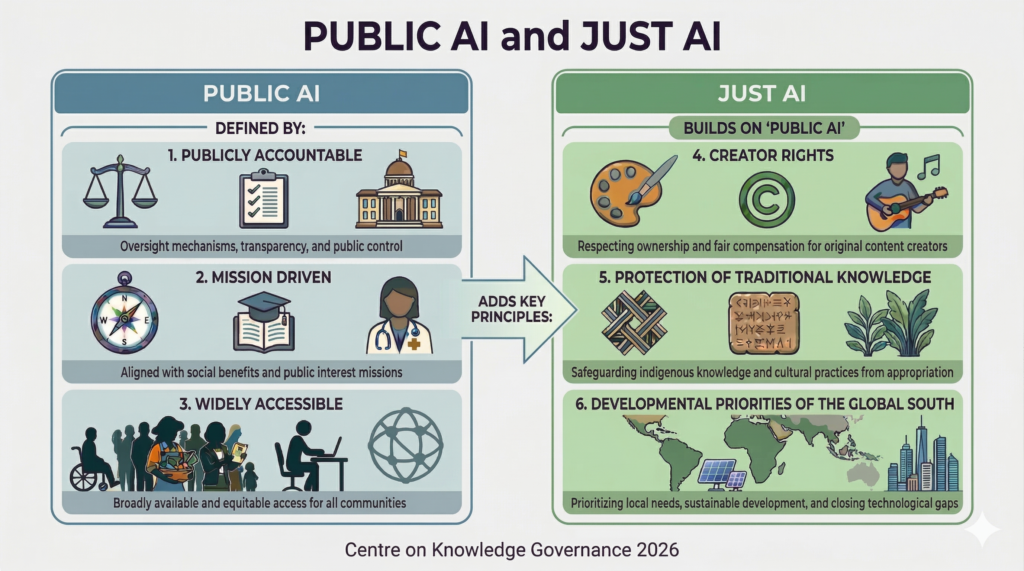

The WTO’s 14th Ministerial Conference (MC14) starts in Yaoundé, Cameroon, next week with a packed agenda and real stakes. Buried in the long list of negotiations is a decision that will have a significant impact beyond trade: whether to renew the moratorium on non-violation complaints under the TRIPS Agreement. The outcome will help determine whether the TRIPS flexibilities and exceptions, particularly copyright exceptions, which have recently become the backbone of the AI economy, can be challenged at the WTO.

Two Moratoriums, One Bargain

Since 1998, WTO members have supported a temporary moratorium on customs duties on electronic transmissions, including software downloads, streamed content, and digital services. That moratorium has been extended at every Ministerial Conference since. It is up for renewal again at MC14, where the United States (US) is pushing to make it permanent.

The moratorium originated at the 1998 WTO Ministerial in Geneva, where members adopted a Work Program on E-commerce and committed to “continue their current practice of not imposing customs duties on electronic transmissions” (WTO 1998). Critically, the term “electronic transmissions” was never defined. That ambiguity allowed the scope of the moratorium to expand alongside the digital economy, covering an ever-wider range of digital content and services without any fresh multilateral agreement.

Since then, the US has been embedding the moratorium in its bilateral free trade agreements. The US-Jordan FTA in 2000 was the first agreement to include a binding commitment not to impose customs duties on electronic transmissions. Recent agreements on reciprocal trade (ARTs) go further and require countries to support multilateral adoption of a permanent moratorium on customs duties on electronic transmissions at the WTO. All these efforts build a web of bilateral obligations that formalize the current push for a permanent multilateral moratorium at MC14.

Less discussed but just as consequential is a second moratorium: the freeze on non-violation and situation complaints (NVC) under the TRIPS Agreement, which has also been extended at each Ministerial Conference since 1995. Under TRIPS Article 64, absent the moratorium, a WTO member could file a non-violation complaint even when no TRIPS rule has been broken, claiming only that expected benefits have been “nullified or impaired” by another member’s measures.

Non-violation claims create a significant IP weapon: a country’s copyright exceptions, fair use, limitations for research and education, patentability requirements, and compulsory licenses could, in principle, be challenged at the WTO not for violating TRIPS but for frustrating the commercial expectations of foreign rightsholders. Any measure that allegedly nullifies or impairs benefits under TRIPS may, under certain conditions, be challenged this way, on the theory that it frustrates a member’s legitimate expectations. That creates a pathway to challenge a wide range of legitimate public-interest policies that affect rightsholders, including the US fair use doctrine. US copyright law includes a variety of specific exceptions, but fair use is the oldest and the most broadly applicable of them.

As IP scholar Frederick Abbott warned as early as 2003, “non-violation causes of action could be used to threaten developing Members’ use of flexibilities inherent in the TRIPS Agreement and intellectual property law more generally.
Thus, for example, Members that adopt relatively generous fair use rules in the fields of copyright or trademark might find that they are claimed against for depriving another.”

The two moratoriums have been traded as a package. Developing countries seeking the TRIPS NVC moratorium, which protects domestic policy space in health, access to knowledge, education, and technology transfer, have had to support the e-commerce moratorium, which benefits US digital platforms. Each Ministerial Conference is, in effect, another round of that exchange. If the e-commerce moratorium becomes permanent at MC14, as the US proposes, the key question is what developing countries receive in return, particularly on the TRIPS NVC side.

Significance of Copyright Exceptions

Many key internet functions rely on copyright limitations and exceptions. Search engines cache and index content without negotiating individual licensing agreements; search previews display short snippets; CDNs buffer and transmit protected works; cloud services store user-uploaded copyrighted files. According to the CCIA’s 2025 report, fair use industries accounted for 18 percent of US GDP in 2023, with $4.9 trillion in value added and $10.2 trillion in revenues, and employed one in seven American workers. Within that broader figure, AI-related fair use industries alone generated $1.7 trillion in revenues in 2023, up 78 percent since 2017.

The AI industry has added a new dimension. Training large language models requires access to vast quantities of text: books, articles, web pages, and code repositories. Much of that access has been broadly justified under fair use, on the argument that training is transformative and serves a new purpose. In that sense, AI companies and the broader data economy are the newest dependents on copyright exceptions.
If those limitations and exceptions can be challenged through non-violation complaints at the WTO, bypassing the question of whether they violate TRIPS, the legal foundation for AI training could become globally contestable.

The Buenos Aires Lesson

At the Buenos Aires Ministerial Conference in December 2017, during Donald Trump’s first term, the renewal of both the e-commerce and TRIPS NVC moratoriums was uncertain. Both were eventually extended. The episode revealed, or at least made visible, that the fair use and safe harbor exceptions underpinning internet commerce were potentially vulnerable to non-violation challenges. There was a growing awareness among US tech industry stakeholders of how much the TRIPS NVC moratorium mattered to their legal operating environment, and the two moratoriums were treated as a package.

That understanding should be stronger today. AI companies are actively navigating copyright litigation in domestic courts, with outcomes still unresolved. Exposure to non-violation complaints at the WTO would add a second front. What was at stake in 2017 is now more visible and more significant.

What’s Next

The argument is pretty straightforward. If the US